In this 5-part blog series I will show you how to deploy NSX-T in your (lab) environment. This is a very basic setup and is more of a reference to the different steps involved, rather than a best-practice guide.

Setup

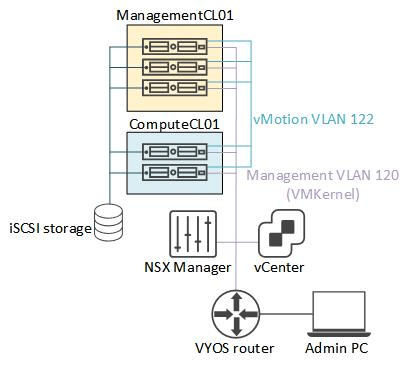

Before we get started with NSX-T, let me show you the environment I’ve set up for this purpose. It’s all nested ESXi, built with William Lam’s excellent nested ESXi OVAs. Everything runs vSphere 7 with vCenter 7, and we’re deploying NSX-T 3.

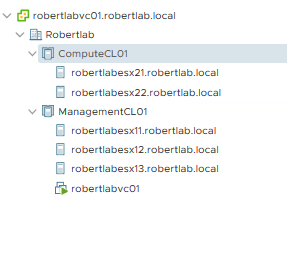

I’m starting with 3 hosts in a management cluster and 2 hosts for compute resources. I’ve not deployed any KVM hosts yet.

As you can see, I haven’t deployed any workloads either; I’m really starting from scratch here.

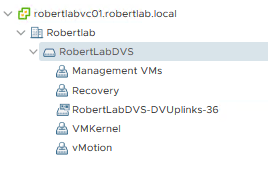

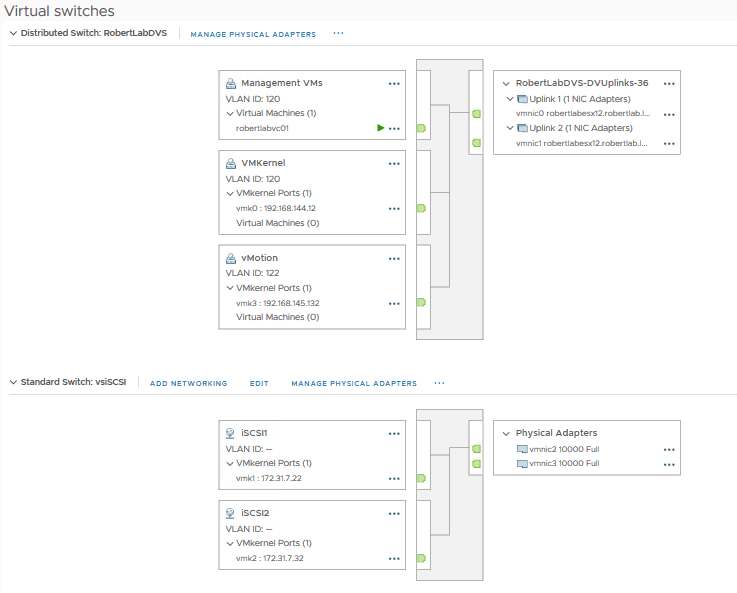

I have deployed one Distributed vSwitch (DVS, or virtual Distributed Switch, vDS), version 7.0. It is necessary to use this version because it allows us to leverage the NSX-T Portgroups.

Each host has 4 “physical” uplinks (they’re nested ESXi, so physical is dubious). Two of the uplinks are allocated to the DVS. The other two are connected to a standard vSwitch (VSS) for the physical iSCSI storage (this really is a physical SAN).

The uplinks are connected to the physical ESXi via trunk ports, which in turn connect to a (virtual) VYOS router. This, finally, gives us north/south connectivity.

A picture says a thousand words, so here is a diagram:

VLANs

Before we get started, here is a quick table showing the different VLANs I use in my environment:

| Network | VLAN | Purpose/contents |
|---|---|---|
| 192.168.144.0/24 | 120 | ESXi VMkernel, vCenter, NSX manager, Edge management IPs |
| 192.168.145.0/25 | 121 | unused |
| 192.168.145.128/25 | 122 | vMotion |
| 192.168.146.0/25 | 123 | unused |
| 192.168.146.128/25 | 124 | TEPs/encapsulated traffic |
| 192.168.147.0/25 | 125 | Transit/uplink network |
| 192.168.147.128/25 | 126 | unused |
| 192.168.148.0/23 (divided into 4x /25) | GENEVE | VMs inside overlay |
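If you want to sanity-check an addressing plan like this before building it, a few lines of Python will do. This is only a sketch using the standard `ipaddress` module: the networks are copied from the table, while the function names and the short purpose labels are my own. It splits the overlay /23 into its four /25 segments and confirms that none of the networks overlap.

```python
import ipaddress

# VLAN plan copied from the table: (network, VLAN ID, short label).
# The labels are shortened by me for readability.
VLAN_PLAN = [
    ("192.168.144.0/24", 120, "management"),
    ("192.168.145.0/25", 121, "unused"),
    ("192.168.145.128/25", 122, "vMotion"),
    ("192.168.146.0/25", 123, "unused"),
    ("192.168.146.128/25", 124, "TEPs"),
    ("192.168.147.0/25", 125, "transit/uplink"),
    ("192.168.147.128/25", 126, "unused"),
]

# The overlay range that only exists inside Geneve.
OVERLAY = ipaddress.ip_network("192.168.148.0/23")


def overlay_segments(overlay=OVERLAY, new_prefix=25):
    """Split the overlay range into equally sized segments."""
    return list(overlay.subnets(new_prefix=new_prefix))


def find_overlaps(plan=VLAN_PLAN, overlay=OVERLAY):
    """Return every pair of networks in the plan that overlap."""
    nets = [ipaddress.ip_network(net) for net, _, _ in plan] + [overlay]
    return [
        (a, b)
        for i, a in enumerate(nets)
        for b in nets[i + 1:]
        if a.overlaps(b)
    ]


if __name__ == "__main__":
    for seg in overlay_segments():
        # Subtract the network and broadcast addresses.
        print(seg, "usable hosts:", seg.num_addresses - 2)
    print("overlapping networks:", find_overlaps())
```

Each /25 leaves 126 usable addresses, which is plenty for a lab segment.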

An interesting one in the table above is VLAN 124 (and also the one we’ll be using below). This is the VLAN in which our TEPs will reside, and as such also the VLAN that all the VM-to-VM traffic will “use”. Because Geneve is an encapsulation protocol, the physical network will only see this VLAN and its subnet, even though the VMs themselves will reside in the 192.168.148.0/23 network. The VM traffic will be encapsulated and sent from the source ESXi host’s TEP to the destination ESXi host’s TEP.

This is why NSX is such a powerful product; you can design any network you want in the virtual world, and the physical world will only ever see the TEP-TEP traffic. More on that later.
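To make the “physical world only sees TEP-TEP traffic” idea a bit more concrete, here is a toy model in Python. It is not how NSX implements anything; it only illustrates the addressing. The subnets come from the VLAN table and 6081 is the IANA-assigned Geneve UDP port, but the class names, the specific VM and TEP addresses, and the VNI value are all made up for illustration.

```python
import ipaddress
from dataclasses import dataclass

TEP_NET = ipaddress.ip_network("192.168.146.128/25")   # VLAN 124 in the table
OVERLAY_NET = ipaddress.ip_network("192.168.148.0/23")  # VM addresses
GENEVE_UDP_PORT = 6081  # IANA-assigned UDP port for Geneve


@dataclass(frozen=True)
class InnerPacket:
    """What the VMs think they are sending."""
    src_vm: str
    dst_vm: str


@dataclass(frozen=True)
class OuterPacket:
    """What actually crosses the physical network."""
    src_tep: str
    dst_tep: str
    udp_dst_port: int
    vni: int
    inner: InnerPacket


def encapsulate(inner, src_tep, dst_tep, vni):
    """Wrap a VM packet in an outer TEP-to-TEP header (toy model)."""
    for ip in (inner.src_vm, inner.dst_vm):
        if ipaddress.ip_address(ip) not in OVERLAY_NET:
            raise ValueError(f"{ip} is not an overlay address")
    for ip in (src_tep, dst_tep):
        if ipaddress.ip_address(ip) not in TEP_NET:
            raise ValueError(f"{ip} is not a TEP address")
    return OuterPacket(src_tep, dst_tep, GENEVE_UDP_PORT, vni, inner)


def physical_network_view(packet):
    """The only addresses the physical switches and routers get to see."""
    return (packet.src_tep, packet.dst_tep)


if __name__ == "__main__":
    # Hypothetical VM and TEP addresses, and a hypothetical VNI.
    pkt = encapsulate(
        InnerPacket("192.168.148.10", "192.168.148.20"),
        src_tep="192.168.146.131",
        dst_tep="192.168.146.132",
        vni=5001,
    )
    print("physical network sees:", physical_network_view(pkt))
    print("VMs see:", (pkt.inner.src_vm, pkt.inner.dst_vm))
```

The 192.168.148.x addresses never show up in the outer header, which is why the physical network does not need to know that subnet for VM-to-VM traffic.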

Deploying the NSX-T Manager

With our environment clean and ready to go, we can deploy the first NSX-T Manager.

As with NSX-V controllers (and crowds), the NSX-T Manager normally comes in clusters of three, but for lab environments it is OK to deploy just a single manager. You’ll get some warnings, but they can be safely ignored; they will not impact the workings in any way. Do not do this in production, obviously.

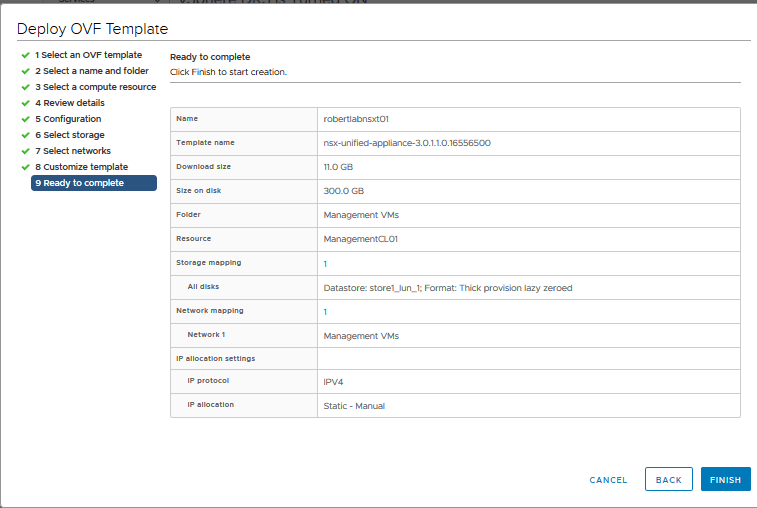

The first step is to simply get the NSX-T OVA and deploy it in your environment, following all the steps.

Patiently wait until the deployment is done, then power up the VM, then wait patiently some more. Or be like me and keep refreshing the page until it finally shows up!

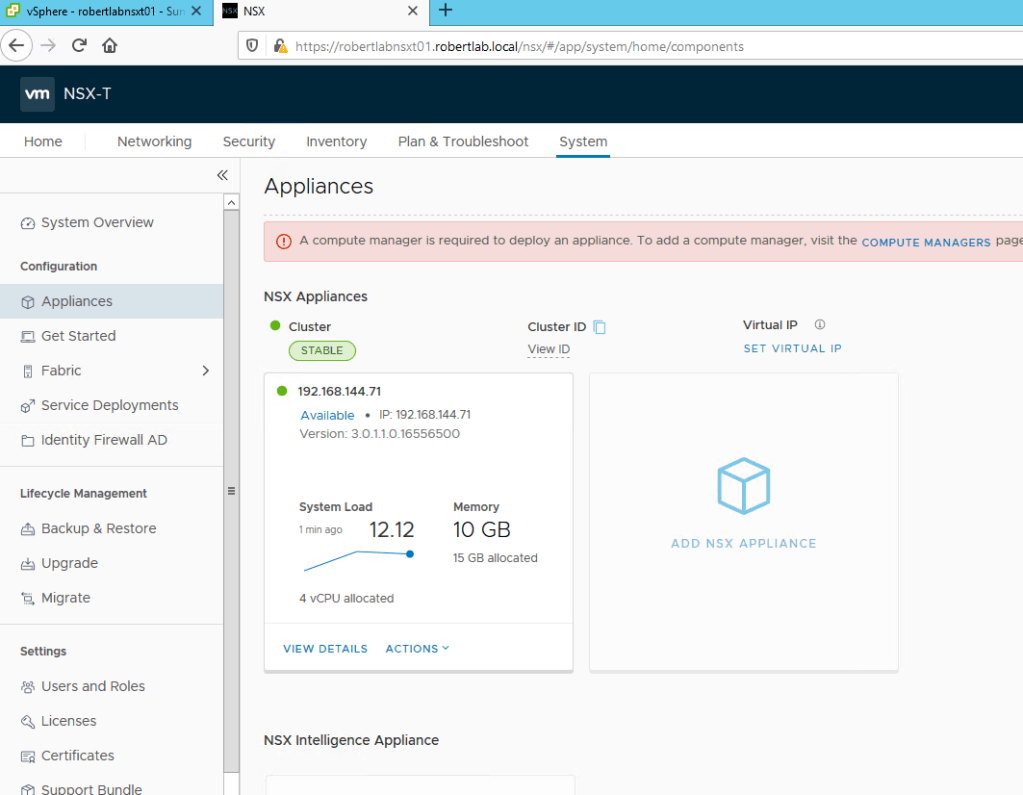

On the System -> Appliances tab you should see a happy one-member cluster of NSX-T managers! Well, with one big warning we’re going to address immediately.
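If you would rather poll for this than keep refreshing the page, the NSX-T Manager also exposes the cluster state over its REST API at `/api/v1/cluster/status`. The sketch below uses only the Python standard library. Treat the response field names (`mgmt_cluster_status`, `control_cluster_status`, and the `STABLE` value) as my reading of the API reference, so verify them against your version. Certificate verification is switched off here because a fresh lab manager has a self-signed certificate; don't do that outside a lab.

```python
import base64
import json
import ssl
import urllib.request


def fetch_cluster_status(host, username, password):
    """GET /api/v1/cluster/status from an NSX-T Manager (lab use only)."""
    url = f"https://{host}/api/v1/cluster/status"
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    request = urllib.request.Request(
        url,
        headers={"Authorization": f"Basic {token}", "Accept": "application/json"},
    )
    # Lab shortcut: accept the manager's self-signed certificate.
    context = ssl.create_default_context()
    context.check_hostname = False
    context.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(request, context=context, timeout=10) as response:
        return json.load(response)


def is_cluster_stable(status):
    """True when both management and control cluster report STABLE.

    The field names are assumptions based on the NSX-T API reference.
    """
    mgmt = status.get("mgmt_cluster_status", {}).get("status")
    ctrl = status.get("control_cluster_status", {}).get("status")
    return mgmt == "STABLE" and ctrl == "STABLE"
```

You would call it as `is_cluster_stable(fetch_cluster_status("nsx.lab.local", "admin", "your-password"))`, where the hostname and credentials are placeholders for your own.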

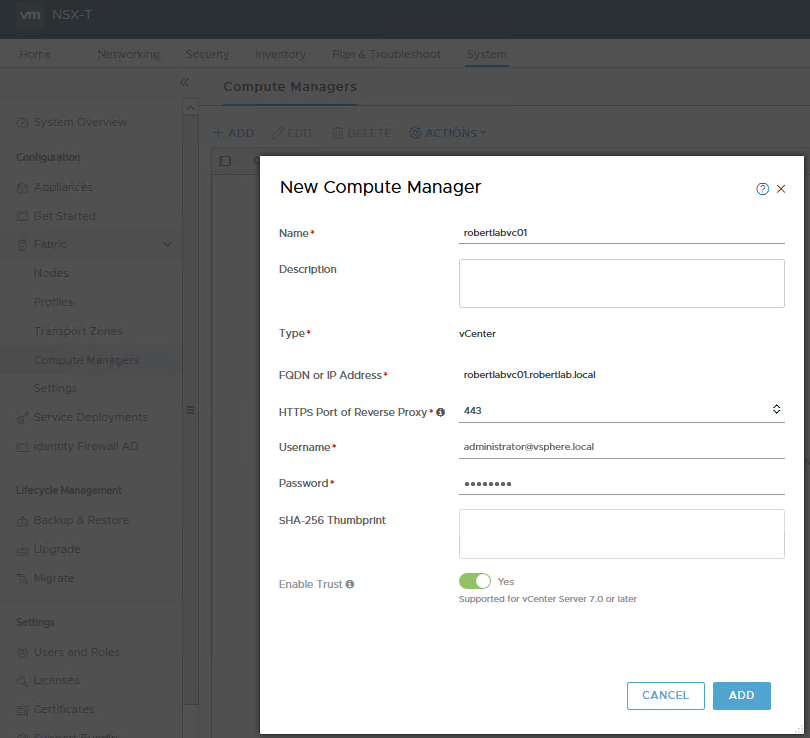

The Compute Manager is the vCenter you want to tie your NSX-T Manager to. Go to System -> Fabric -> Compute Managers and click ‘+ADD’. Next, fill in the details of the vCenter you want to connect to. Also turn on ‘Enable Trust’; this sets up a 2-way trust between vCenter and the NSX-T Manager, which is used for Kubernetes.

After a few seconds it should show up in the table and become green.
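The same registration can be scripted against the REST API with a POST to `/api/v1/fabric/compute-managers`. The sketch below only builds the request; it does not send anything. The payload fields are my understanding of the NSX-T 3.x API, and mapping the ‘Enable Trust’ toggle to `set_as_oidc_provider` is an assumption on my part, so check the API reference for your version. The hostnames, credentials and thumbprint in the usage note are placeholders.

```python
import base64
import json
import urllib.request


def compute_manager_payload(vcenter_fqdn, username, password, thumbprint,
                            enable_trust=True):
    """Build the JSON body for registering a vCenter as Compute Manager."""
    return {
        "display_name": vcenter_fqdn,
        "server": vcenter_fqdn,
        "origin_type": "vCenter",
        # Assumed to correspond to the 'Enable Trust' toggle in the UI.
        "set_as_oidc_provider": enable_trust,
        "credential": {
            "credential_type": "UsernamePasswordLoginCredential",
            "username": username,
            "password": password,
            # SHA-256 thumbprint of the vCenter certificate.
            "thumbprint": thumbprint,
        },
    }


def build_register_request(nsx_host, nsx_user, nsx_password, payload):
    """Prepare (but do not send) the POST request to the NSX-T Manager."""
    token = base64.b64encode(f"{nsx_user}:{nsx_password}".encode()).decode()
    return urllib.request.Request(
        f"https://{nsx_host}/api/v1/fabric/compute-managers",
        data=json.dumps(payload).encode(),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

For example, `build_register_request("nsx.lab.local", "admin", "your-password", compute_manager_payload("vcenter.lab.local", "administrator@vsphere.local", "your-password", "AA:BB:..."))` returns a request object you can pass to `urllib.request.urlopen` once you are happy with it.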

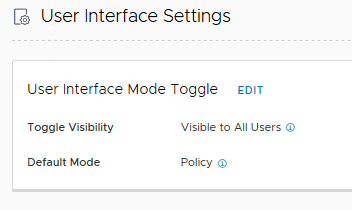

Don’t forget to enter your license under Settings. One final pro tip: go to User Interface Settings and change the Toggle Visibility setting to visible. That way you can swap between the policy/new and the manager/old mode.

And that’s it for this post! Next time we’ll start configuring our environment with profiles and define clusters and nodes, stay tuned!

Hello Robert,

Do I have to put the Edge node in a separate cluster in vCenter? Can we put the Edge node in the same workload cluster?


Hi! You can definitely put everything into the same vSphere Cluster, no problem! This is what is known as a “collapsed cluster”. More info here: https://docs.vmware.com/en/VMware-NSX-T-Data-Center/3.2/installation/GUID-3770AA1C-DA79-4E95-960A-96DAC376242F.html


Hi Robert,

I’m planning to build the setup as you explained. Could you please elaborate on the sentence “The uplinks are connected to the physical ESXi via trunk ports, which in turn connect to a (virtual) VYOS router. This, finally, gives us north/south connectivity.”

1. You mean the uplinks of the nested ESXi connect to the physical ESXi? Could you please explain this configuration?

2. Do we need to create all these VLANs on the VYOS router?


Hi! Apologies for the delay, I recently switched jobs and haven’t made a new lab yet – I was planning to do a post about your question 🙂

To answer your questions:

1. It helps to forget the physical ESXi hosts when it comes to the labs, as they merely pass the data through, and to focus on the nested ESXi, as that is what the environment actually is. So the uplinks I’m talking about in the blog are the ones from the nested ESXi to the VYOS. As long as the VLANs can reach both points, it’s fine.

2. All VLANs must be available on the physical switch so that it can move the traffic based on the tags, and the VLANs which require a default gateway, i.e. the routed VLANs, must be created on the VYOS. So that would be the VMkernel/management VLAN and the transit/uplink VLAN. That said, for some networks you must give a default gateway even if you don’t intend to route the network, and if that gateway doesn’t exist you’ll see a large number of ARP requests for it. So personally I just create all of them 🙂

Hope that helps!
