Now that we’ve got the easy stuff out of the way, time to focus on what NSX-T is really about: networking. In this post I will walk you through the creation of the transport zones, some profile creation, and the preparation of your ESXi environment. Next post we’ll be adding routers to the mix!

A lot of the primary configuration happens in the ‘System’ tab of NSX-T, so that’s where we’ll be spending most of our time today.

Step 1: Transport Zone

As in NSX-V, the ‘base layer’ of your networking setup is the Transport Zone. The transport zone defines the span of the logical network, basically which transport nodes participate in the same network. Transport Nodes are the ESXi hosts that handle the east/west traffic, which in our environment is all of them.

There are 2 types of transport zones: overlay and VLAN.

- Overlay: in NSX-V this was VXLAN; in NSX-T it is Geneve, a similar but distinct encapsulation protocol. This is your east/west connectivity.

- VLAN is just that; VLAN connectivity, used at the Edge uplinks for north/south connectivity.

A transport node can be part of multiple transport zones. A logical switch can only belong to one.

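Note that a transport zone is not a security feature. It only defines where a logical network is available, not which traffic is allowed on it.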
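To make the membership rules above concrete, here is a minimal Python sketch of the relationship between transport zones, transport nodes, and logical switches. It is a toy model, not NSX-T code: the class names, the host names, and the two example switches are all made up for illustration. Only the two default zone names come from NSX-T itself.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TransportZone:
    name: str
    kind: str  # "OVERLAY" or "VLAN"


@dataclass
class TransportNode:
    name: str
    # A transport node can be a member of several transport zones.
    zones: set = field(default_factory=set)


@dataclass(frozen=True)
class LogicalSwitch:
    name: str
    # A logical switch belongs to exactly one transport zone.
    zone: TransportZone


def span(switch, nodes):
    """Return the names of the nodes on which the switch is available."""
    return sorted(node.name for node in nodes if switch.zone in node.zones)


overlay = TransportZone("nsx-overlay-transportzone", "OVERLAY")
vlan = TransportZone("nsx-vlan-transportzone", "VLAN")

# Hypothetical hosts: one in both zones, one in the overlay zone only.
nodes = [
    TransportNode("mgmt-esx-01", {overlay, vlan}),
    TransportNode("comp-esx-01", {overlay}),
]

web = LogicalSwitch("web-segment", overlay)    # hypothetical overlay switch
uplink = LogicalSwitch("edge-uplink", vlan)    # hypothetical VLAN switch

print(span(web, nodes))
print(span(uplink, nodes))
```

The overlay switch spans both hosts, while the VLAN switch only exists on the host that is also a member of the VLAN zone. That is all a transport zone does: it scopes where a network can show up.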

NSX-T has 2 default transport zones: nsx-overlay-transportzone and nsx-vlan-transportzone. Prior to NSX-T 3.1 transport zones were mapped to a set virtual switch. Now that is no longer the case, so we can simply use these zones.

This is a small environment, so there is no need to create multiple transport zones. I’ve actually never seen multiple transport zones being used, even in a large enterprise, so only create more than these 2 if you have a very specific need.

Step 2: IP Address Pools

Much of the configuration done for NSX-T is built on the principle of consistency, reusability, and ease of growth. You define global profiles, global zones, and also global IP pools. The IP pool we have to create now is the one that gives out all the IP addresses to our TEPs. Kind of like DHCP and IPAM within NSX-T.

You can expand pools with multiple subnets, but it’s much easier to just define a large enough scope to accommodate any future growth and keep it consistent.
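If you want a quick sanity check on "large enough", a few lines of Python will do. This is only a rough sketch under my own assumptions: the address range is a made-up example, the host count is hypothetical, and I assume two TEPs per host (one per active uplink) plus a growth factor of 2. Adjust all of these to your own environment.

```python
import ipaddress
import math


def range_size(start, end):
    """Number of addresses in an inclusive start-end range."""
    first = int(ipaddress.ip_address(start))
    last = int(ipaddress.ip_address(end))
    if last < first:
        raise ValueError("range end is before range start")
    return last - first + 1


def teps_needed(hosts, teps_per_host=2, growth_factor=2.0):
    """TEP addresses to plan for, including headroom for growth.

    teps_per_host=2 is an assumption (one TEP per active uplink).
    """
    return math.ceil(hosts * teps_per_host * growth_factor)


# Hypothetical values, not taken from the lab in this series.
available = range_size("192.168.124.10", "192.168.124.100")
needed = teps_needed(hosts=6)

print(f"{available} addresses in range, {needed} needed with headroom")
if available < needed:
    print("Range is too small, pick a bigger one")
```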

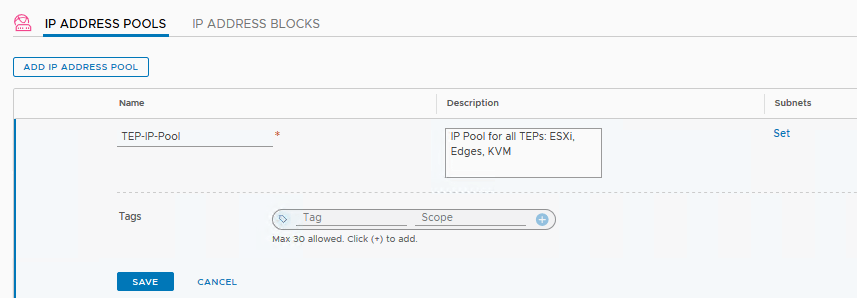

Go to the Networking tab and click ‘IP Address Pools’, then click ‘Add IP Address Pool’. You could also define a (CIDR-defined) address block and use that in the pool, but I’ll define an IP range.

Input your details here, then click ‘Set’ to define a subnet.

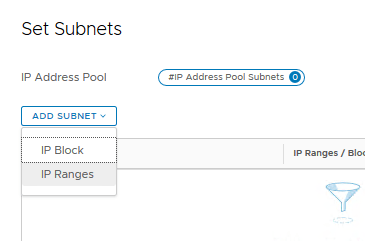

Here we could use an IP Block if we have created it, or in this case define an IP range.

If you input your range, make sure you click ‘add item’ below it. This is a quirk you will run into in several places in NSX-T. If you don’t click that, it’ll just ignore your input.

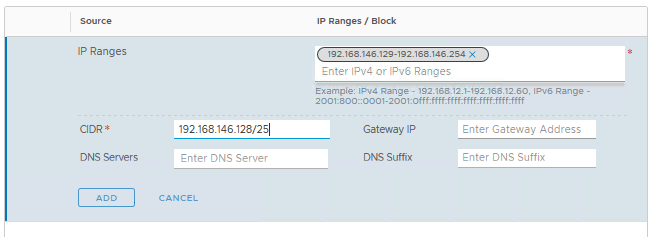

Then it shows up as an object in the list.

Enter your subnet in CIDR notation in the ‘CIDR’ field. All my hosts are located in the same L2 subnet, without any need for L3 connectivity, so I won’t define a gateway. Click ‘ADD’.
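The same subnet definition can be sketched as data. The snippet below builds a dictionary for a static subnet and checks that the range actually falls inside the CIDR before anything is sent anywhere. The field names (`allocation_ranges`, `cidr`, `gateway_ip`, `resource_type`) are modelled on my understanding of the NSX-T Policy API and should be treated as assumptions to verify against the API reference for your version. The addresses are made-up examples.

```python
import ipaddress


def build_static_subnet(cidr, start, end, gateway=None):
    """Build a static-subnet definition after validating the inputs."""
    network = ipaddress.ip_network(cidr)
    first = ipaddress.ip_address(start)
    last = ipaddress.ip_address(end)

    if first > last:
        raise ValueError("range start is after range end")
    for address in (first, last):
        if address not in network:
            raise ValueError(f"{address} is outside {network}")

    subnet = {
        "resource_type": "IpAddressPoolStaticSubnet",  # assumed field value
        "cidr": str(network),
        "allocation_ranges": [{"start": str(first), "end": str(last)}],
    }
    # The gateway is optional: with all TEPs in one L2 subnet we leave it out.
    if gateway is not None:
        if ipaddress.ip_address(gateway) not in network:
            raise ValueError(f"gateway {gateway} is outside {network}")
        subnet["gateway_ip"] = gateway
    return subnet


# Hypothetical addressing for a TEP pool without a gateway.
subnet = build_static_subnet(
    "192.168.124.0/24", "192.168.124.10", "192.168.124.100"
)
print(subnet)
```

Validating up front is cheap, and it catches a range typed into the wrong subnet before it ever reaches NSX-T.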

Great, our subnet has been added, click ‘Apply’.

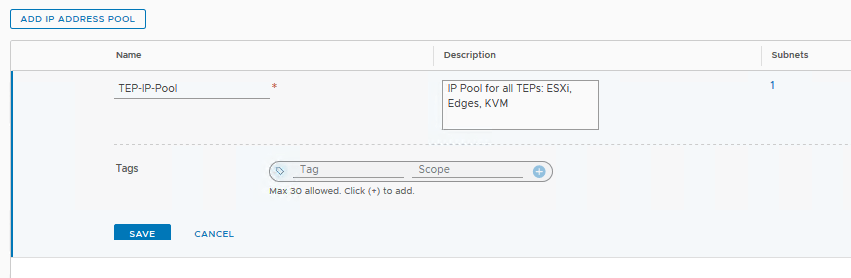

And now we see the ‘Set’ button has changed to a ‘1’. You can click that in the future to add more subnets, should you need them. We won’t bother with the tags for now, so click ‘Save’.

Then we can see the ‘TEP-IP-Pool’ has been added and has the state ‘Success’. Awesome! Let’s go back to the ‘System’ tab.

Step 3: Profiles

This is the part where NSX-T gets all… NSX-T-ish. The big difference with configuring this product is that a lot of it is governed by profiles. This allows for a very clean, very consistent environment, but was tricky for me to get my head around. Especially the first time when you open up NSX-T thinking it is like NSX-V (it isn’t).

For now we’ll be looking at 2 profiles: Uplink Profiles and Transport Node Profiles.

Uplink profiles

This is essentially the configuration that you’d normally do in your VDS or portgroup settings, via vCenter. Since this is NSX-T, you define the way the TEPs are connecting to the uplink via this profile.

I would have liked to use the default ‘nsx-default-uplink-hostswitch-profile’, but I have to configure a transport VLAN, so I’m creating my own uplink profile. Other than the VLAN it is the same as the default. Click ‘+ADD’.

These are the settings we want to apply:

- MTU set to 1600 (default, so we leave it empty)

- Transport VLAN set to 124

- Teaming/failover set to ‘Load Balance Source’ and add both uplink-1 and uplink-2

Click ‘ADD’, and that’s it for the Uplink Profile, which we will apply in the next step.
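For those who prefer to see the settings above as data, here is a small Python sketch that builds the equivalent uplink profile definition. The field names and the `LOADBALANCE_SRCID` value are modelled on my understanding of the NSX-T REST API for uplink host switch profiles; treat them as assumptions and check them against the API reference for your version. The function itself is just an illustration and does not talk to NSX-T.

```python
def build_uplink_profile(name, transport_vlan, uplinks, mtu=None):
    """Build an uplink profile definition matching the settings in the UI."""
    if not 0 <= transport_vlan <= 4094:
        raise ValueError("transport VLAN must be between 0 and 4094")
    if not uplinks:
        raise ValueError("at least one active uplink is required")

    profile = {
        "resource_type": "UplinkHostSwitchProfile",  # assumed field value
        "display_name": name,
        "transport_vlan": transport_vlan,
        "teaming": {
            # Assumed API name for 'Load Balance Source' in the UI.
            "policy": "LOADBALANCE_SRCID",
            "active_list": [
                {"uplink_name": uplink, "uplink_type": "PNIC"}
                for uplink in uplinks
            ],
        },
    }
    # Leaving the MTU out mirrors leaving the field empty in the UI,
    # so the default applies.
    if mtu is not None:
        profile["mtu"] = mtu
    return profile


profile = build_uplink_profile("uplink-profile", 124, ["uplink-1", "uplink-2"])
print(profile)
```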

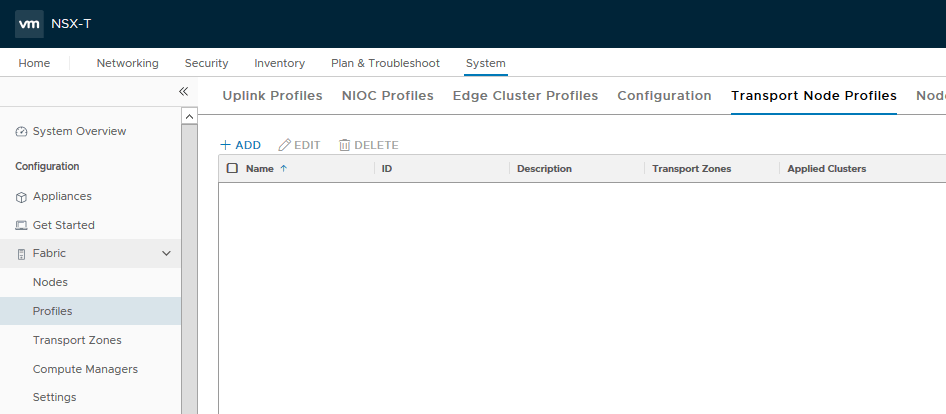

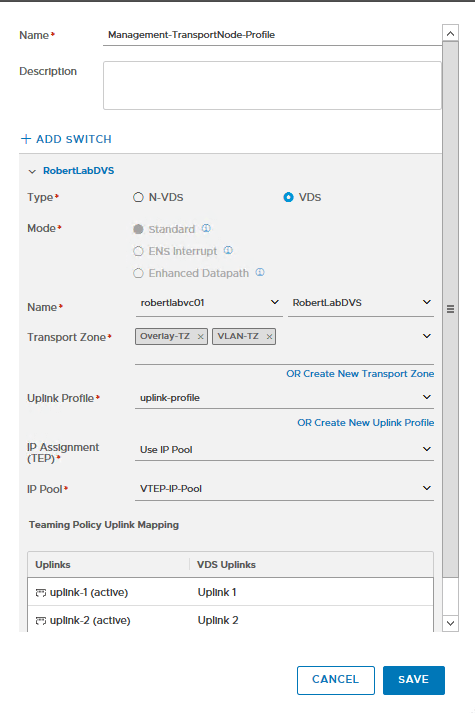

Transport Node Profiles

The Transport Node Profiles are the configuration which we push to our transport nodes. By doing it this way all the nodes will have the same configuration every time, and we won’t have to configure each node individually.

Since we’re running a collapsed design (reader beware: the documentation doesn’t take VDS 7.0 into account), we’ll have to create 2 Transport Node Profiles: one for the Compute cluster, which will only host workloads on NSX-T virtual networks, and one for the Management cluster, which will host some VMs as well as our Edge devices. Because of this, the Management cluster has to be part of both our transport zones (VLAN and Overlay), whereas the Compute cluster only needs to be part of the Overlay zone.

Go to the respective tab and click ‘+ADD’. Note that there are no default profiles here.

Here it all comes together; the VDS, the transport zone, the uplink profile, and the IP pool.

If we selected the N-VDS option here, the installation process would create the N-VDS for us. But we are using our previously created vSphere 7 VDS, which is why the switch name in the Transport Zone doesn’t matter for us now.

Next choice is the ‘Mode’. Unless you have a very specific need for Enhanced Datapath, such as NFV workloads that need DPDK capabilities, pick ‘Standard’. If those words didn’t mean anything to you, pick ‘Standard’. In 99% of cases, pick ‘Standard’. (In one version it was named ‘Performance mode’, which is just a disaster waiting to happen.)

For the other option to work you need very specific hardware and drivers, so if that’s not something that was specifically determined during hardware purchasing, go for ‘Standard’.

Note: in NSX-T 3.1 another option was added, “ENS Interrupt”. The same applies as above.

We’re first making our Management node profile, so next we select our ‘Overlay-TZ’ transport zone as well as the ‘VLAN-TZ’. For the Compute node profile we only do the ‘Overlay-TZ’. Then we choose our uplink-profile aptly named ‘uplink-profile’, then ‘Use IP pool’ with our ‘TEP-IP-Pool’ for IP Assignments. Then finally for each of the uplinks we click the dropdown and select our two uplinks we created for our VDS. Click ‘Save’.

We have created our Transport Node Profiles, which we will use to finally install NSX-T in our clusters!

We can apply the appropriate profile to our clusters that we want to have participate in our overlay network and get a consistent deployment for each of the hosts.
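The two profiles differ only in their name and their transport zones; everything else is shared. A short Python sketch makes that explicit. Note that this is a plain illustration of the idea, not an NSX-T API payload: the dictionary keys, the profile names ‘TNP-Management’ and ‘TNP-Compute’, and the VDS uplink names are hypothetical.

```python
import copy

# Settings shared by both Transport Node Profiles.
SHARED = {
    "host_switch_type": "VDS",
    "mode": "STANDARD",
    "uplink_profile": "uplink-profile",
    "ip_assignment": {"type": "ip_pool", "pool": "TEP-IP-Pool"},
    # Profile uplink -> VDS uplink (VDS uplink names are hypothetical).
    "uplinks": {"uplink-1": "Uplink 1", "uplink-2": "Uplink 2"},
}


def make_profile(name, transport_zones):
    """Combine the shared settings with the per-cluster differences."""
    profile = copy.deepcopy(SHARED)
    profile["name"] = name
    profile["transport_zones"] = list(transport_zones)
    return profile


def differing_keys(first, second):
    """Return the keys whose values differ between two profiles."""
    return sorted(key for key in first if first[key] != second[key])


management = make_profile("TNP-Management", ["Overlay-TZ", "VLAN-TZ"])
compute = make_profile("TNP-Compute", ["Overlay-TZ"])

print(differing_keys(management, compute))
```

Keeping the shared part in one place is exactly the point of profiles: change it once and every cluster that uses it stays consistent.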

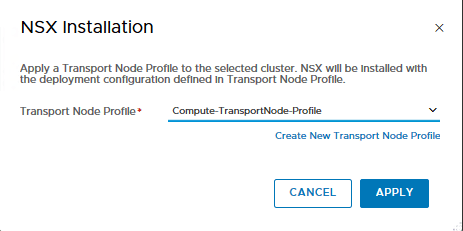

Step 4: Configure Host Transport Nodes

Now we will install NSX-T on our hosts. This is similar to NSX-V’s ‘Host Preparation’.

Go to ‘Nodes’ -> ‘Host Transport Nodes’. Then click the dropdown next to ‘Managed by’ and select your vCenter. Now you should see your clusters and hosts.

Now, what’s interesting here is that we have the option to deploy NSX-T on a cluster as a whole, or per host. If you select the checkmark in front of a single host, a workflow walks you through creating everything we have created so far. So you could do it all from that one window, which is easier to do but a little complicated to explain, so that’s why I did it separately.

Another reason to prepare everything beforehand is that we can use the already created profile to deploy NSX-T consistently to all the hosts in our clusters. Simply click the checkmark in front of the cluster you want to deploy it in (and only that checkmark), then click ‘Configure NSX’.

Then we are only presented with the option to select a Transport Node Profile, where we will select the one we have created for the cluster (in this case Compute).

Click ‘Apply’ and watch the magic happen.

Now NSX-T will apply all the configurations we have created to our hosts. Wait until it shows green dots and ‘Success’ and ‘Up’. If the installation fails it will tell you at what step it failed and why, and it offers a ‘Resolve’ button that lets you retry.
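If you would rather script the waiting than stare at the dots, the general pattern is a simple poll loop. The sketch below is deliberately generic: `fetch_state` is a hypothetical callable that you would implement yourself (for example by querying the NSX-T API for the node’s state), and the state strings ‘success’, ‘failed’ and ‘in_progress’ are my assumptions, not confirmed values.

```python
import time


def wait_for_success(fetch_state, timeout_s=900, interval_s=10,
                     sleep=time.sleep, clock=time.monotonic):
    """Poll fetch_state() until it reports success, failure, or a timeout."""
    deadline = clock() + timeout_s
    while True:
        state = fetch_state().lower()
        if state == "success":
            return True
        if state == "failed":
            raise RuntimeError("host preparation failed, check the UI for the step")
        if clock() >= deadline:
            raise TimeoutError(f"still '{state}' after {timeout_s} seconds")
        sleep(interval_s)


# Demo with a fake state source instead of a real NSX-T call.
fake_states = iter(["in_progress", "in_progress", "success"])
result = wait_for_success(lambda: next(fake_states), sleep=lambda seconds: None)
print("Host prepared:", result)
```

Passing in `sleep` and `clock` keeps the loop easy to test without actually waiting.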

We’ll do the same for our Management/Edge cluster. Since we’re using a collapsed design, our Edge Nodes are located on hosts that also run workloads, so those hosts have to participate in the network as well, i.e. they’re also transport nodes. If you have a separate Edge cluster then you won’t have to prepare its hosts as Transport Nodes, since they will only be used for Edge connectivity. But we’ll get into that in the next post.

If all goes well you will see the VMkernel adapters added to the hosts, signifying they are ready to go.

Wrapping up

NSX-T is a bit different than NSX-V since a lot of the configuration occurs via profiles and general configs which you can apply multiple times, rather than configuring each device individually. This ties in to the whole ‘infrastructure-as-code’ paradigm that’s happening right now. Automation is the name of the game, and the only way to automate is if you have consistency.

It’s a little more complicated and involved, but once it’s all said and done it is much easier to manage and maintain.

Next post we will deploy our Edge Nodes and start tying our infrastructure together!

Great post Robert. I have a question.

I have only one cluster with all VMs and Cloud director workloads. Hope it will work normally. Created the transport node profile with overlay and vlan. Applied to the hosts.

Now the problem is only 3-4 hosts out of total 15 hosts are communicating with each other on MTU 9000. Able to communicate using MTU 1500 and 9000 MTU works for vmk0 (management vmkernel as well).

Not sure why its not communicating through vmk10. All hosts are connected to same switch and VLAN.

Are the Host TEPs and the Edge TEPs on the same subnet? I ran into an issue when preparing an environment I didn’t have the uplink segments as NSX-T segments, but as DVS portgroups instead. You need NSX-T segments for that to work.

First off great articles and one of the only walkthroughs that addresses a collapsed architecture on 3.x with a converged VDS design. The question I had was where did the Transport VLAN 124 come from, in looking at Part 1 of the series I don’t see that VLAN called out in the diagram and I am attempting to set up my own uplink profile and have hit a roadblock. Thanks for any help.

Thank you! I’ve added a short section to post 1 with the different VLANs and subnets I’ve used. Does that help? Good luck with your setup!

Yep! That straightened me out, appreciate the quick response.
